Audit Red Flags in the Age of AI (What Tech CFOs Should Watch for in 2026)

Artificial intelligence (AI) is now embedded in nearly every facet of financial operations, from automated journal entries and revenue recognition to real-time forecasting.

Naturally, companies in the technology industry are often some of the earliest adopters of new advancements, and many have long been integrating AI into their finance functions to increase efficiency, heighten accuracy and better support data-driven decision-making.

Yet, with innovation comes complexity and the potential for auditor scrutiny.

As AI becomes increasingly embedded in financial systems, today’s tech-sector CFOs face a new class of audit vulnerabilities. Revenue models are more dynamic than ever. Data is sprawling and decentralized. Automated processes can introduce hidden biases, create black-box dependencies and complicate audit trails. At the same time, audit standards are evolving as regulators demand greater transparency and control.

For technology CFOs, the challenge is to leverage all of AI’s advantages while also maintaining audit integrity and defensibility.

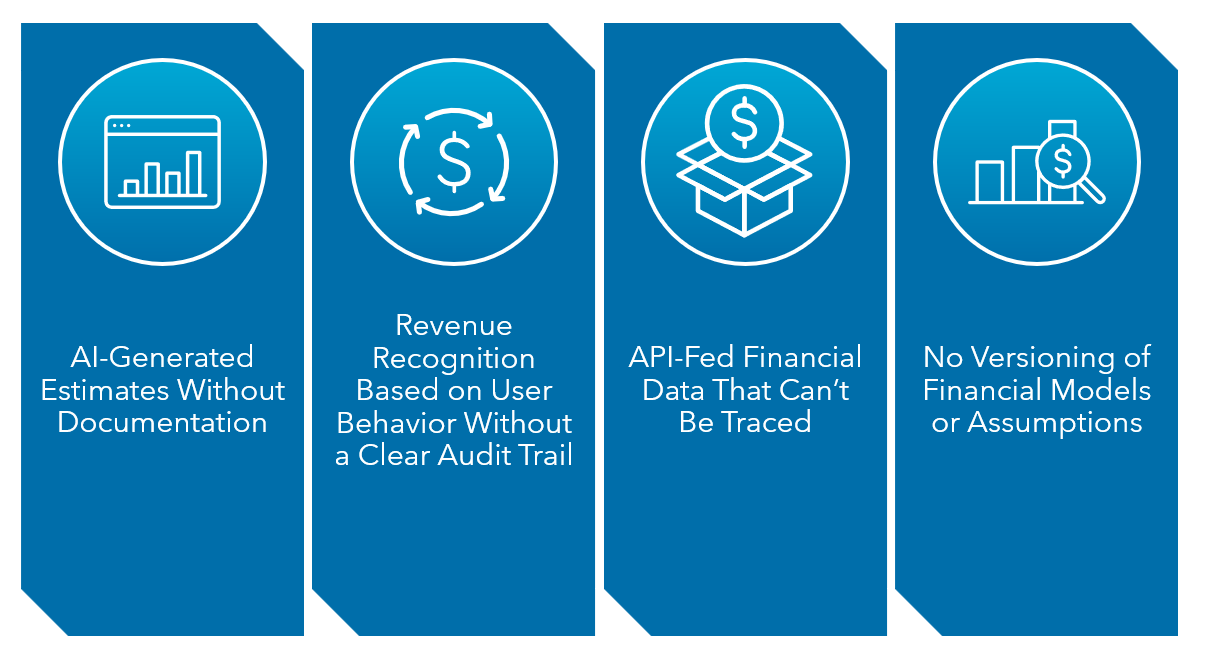

The Four Most Critical AI-Driven Audit Vulnerabilities in Tech Companies

The following four vulnerabilities are significant red flags that CFOs should watch for and proactively manage in the coming year.

1. AI-Generated Estimates Without Documentation

Many companies have started using AI models to generate financial estimates, including reserves, impairments and revenue forecasts.

In technology companies specifically, these models are often embedded in cloud-based forecasting platforms or integrated with product usage analytics, allowing finance teams to generate real-time estimates based on dynamic operational data.

These tools have their place. When properly implemented, they can significantly improve the speed, consistency and predictive accuracy of financial estimates (especially in fast-moving tech environments where traditional forecasting methods may lag behind actual performance). AI can detect patterns in historical data that might be missed by human analysts, reduce manual errors and help finance teams respond more quickly to changing market conditions.

However, these benefits also create challenges for auditors who need to be able to understand and validate the logic behind each estimate.

If you can’t clearly explain how an AI-driven number was derived, or if you lack sufficient documentation for model inputs, assumptions and outputs, the results may be unauditable.

To avoid this issue, maintain transparent model documentation and governance protocols, ensuring you can justify every AI-generated estimate through a clear, defensible audit trail.
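As an illustration of what such documentation might capture, here is a minimal Python sketch that stores one record per AI-generated estimate. The field names, the `document_estimate` helper, the model identifier and the sample figures are all hypothetical; the idea is simply that each number is saved alongside its model version, its assumptions and a fingerprint of the exact inputs used.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def fingerprint(obj) -> str:
    """Return a stable SHA-256 hash of any JSON-serializable object."""
    canonical = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class EstimateRecord:
    """One documented estimate (hypothetical schema, not a standard)."""
    estimate_name: str
    model_id: str
    model_version: str
    inputs_sha256: str
    assumptions: dict
    output_value: float
    generated_at: str


def document_estimate(estimate_name, model_id, model_version,
                      inputs, assumptions, output_value) -> EstimateRecord:
    """Bundle an estimate with the evidence needed to explain it later."""
    return EstimateRecord(
        estimate_name=estimate_name,
        model_id=model_id,
        model_version=model_version,
        inputs_sha256=fingerprint(inputs),
        assumptions=dict(assumptions),
        output_value=output_value,
        generated_at=datetime.now(timezone.utc).isoformat(),
    )


# Illustrative data only.
inputs = [
    {"customer": "C-001", "days_past_due": 45, "balance": 1200.0},
    {"customer": "C-002", "days_past_due": 10, "balance": 800.0},
]
record = document_estimate(
    estimate_name="allowance_for_credit_losses",
    model_id="hypothetical-reserve-model",
    model_version="1.4.0",
    inputs=inputs,
    assumptions={"lookback_months": 24, "loss_rate_floor": 0.01},
    output_value=310.50,
)
print(json.dumps(asdict(record), indent=2))
```

If the input data is archived as well, re-hashing it later and comparing the result to `inputs_sha256` shows whether the estimate can still be tied to the same inputs.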

2. Revenue Recognition Based on User Behavior Without a Clear Audit Trail

As usage-based and consumption-driven pricing models become the norm in software-as-a-service (SaaS) and embedded fintech environments, many finance teams now rely on AI systems to determine when and how they recognize revenue.

These models use behavioral data, such as application programming interface (API) calls, transaction volume or active seat usage, to calculate revenue allocation automatically.

However, this introduces an audit challenge because auditors must be able to verify that you’ve recognized revenue in the correct accounting period and for the appropriate amount. When AI algorithms operate in systems that lack transparency or rely on data that hasn’t been properly verified, it becomes challenging to support revenue recognition with solid, auditable evidence.

If auditors can’t validate usage data and trace it back to source systems, they may determine revenue is misstated or unsupported.

To head off potential audit delays, ensure all usage inputs are timestamped, auditable and reconcilable to financial system entries. Implementing automated audit trails and periodic data integrity checks supports compliance and helps preserve investor confidence.
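A reconciliation of this kind could look roughly like the Python sketch below. It assumes usage events carry ISO 8601 timestamps in UTC and a per-unit price, and that the ledger exposes recognized revenue by month; the event fields, the sample figures and the one-cent tolerance are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from decimal import Decimal

# Illustrative data only: timestamped usage events from a product system.
usage_events = [
    {"event_id": "u1", "timestamp": "2026-01-30T23:10:00+00:00", "units": 1000, "unit_price": "0.002"},
    {"event_id": "u2", "timestamp": "2026-01-31T23:59:59+00:00", "units": 500, "unit_price": "0.002"},
    {"event_id": "u3", "timestamp": "2026-02-01T00:00:01+00:00", "units": 2000, "unit_price": "0.002"},
]

# Illustrative data only: revenue recognized in the ledger, by period.
ledger_revenue = {"2026-01": Decimal("3.00"), "2026-02": Decimal("3.50")}


def revenue_by_period(events):
    """Sum usage-based revenue per calendar month of the event timestamp."""
    totals = defaultdict(Decimal)
    for event in events:
        period = datetime.fromisoformat(event["timestamp"]).strftime("%Y-%m")
        totals[period] += Decimal(event["units"]) * Decimal(event["unit_price"])
    return dict(totals)


def reconcile(events, ledger, tolerance=Decimal("0.01")):
    """Return periods where ledger revenue differs from usage-derived revenue."""
    computed = revenue_by_period(events)
    exceptions = {}
    for period in sorted(set(computed) | set(ledger)):
        diff = ledger.get(period, Decimal("0")) - computed.get(period, Decimal("0"))
        if abs(diff) > tolerance:
            exceptions[period] = diff
    return exceptions


exceptions = reconcile(usage_events, ledger_revenue)
for period, diff in exceptions.items():
    print(f"{period}: ledger differs from usage data by {diff}")
```

Using `Decimal` rather than floating point keeps the comparison exact, and keying periods off the event timestamp makes cutoff questions (such as events logged seconds before or after month-end) visible in the data.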

3. API-Fed Financial Data That Can’t Be Traced

In technology companies, APIs often connect product platforms, billing systems and cloud-based infrastructure directly to the general ledger.

For example, a SaaS company might use APIs to pull subscription usage data from its application into its ERP system, or a fintech platform might feed transaction-level data from its payment engine into its revenue reports.

This automation speeds up the close process and reduces manual errors, but it also creates challenges during an audit. Auditors need to confirm that the financial data is both accurate and complete. That becomes difficult when data flows through multiple APIs (especially those linking product environments, third-party services and internal databases) without clear records of where the data came from, when it was captured or how it was modified.

Establish robust data governance and logging protocols to mitigate this risk. Every data transfer should include traceable metadata showing the data’s source, when you captured it and whether it changed before it was posted to the ledger.
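One way to carry that metadata is sketched below in Python, under the assumption that each API payload can be wrapped in an envelope before it moves downstream. The envelope layout and the function names are hypothetical: the envelope records the source system, the capture time and a hash of the payload, and every transformation appends its own entry so that any unlogged change is detectable before posting.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload) -> str:
    """Stable SHA-256 hash of a JSON-serializable payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def wrap_with_lineage(payload, source_system):
    """Attach source, capture time and a content hash to incoming data."""
    return {
        "payload": payload,
        "lineage": {
            "source_system": source_system,
            "captured_at": _now(),
            "payload_sha256": _digest(payload),
            "transformations": [],
        },
    }


def apply_transformation(envelope, description, transform):
    """Apply a logged change and record the hash of its result."""
    new_payload = transform(envelope["payload"])
    lineage = dict(envelope["lineage"])
    lineage["transformations"] = lineage["transformations"] + [
        {"description": description, "applied_at": _now(),
         "result_sha256": _digest(new_payload)}
    ]
    return {"payload": new_payload, "lineage": lineage}


def verify_before_posting(envelope) -> bool:
    """True only if the payload matches the last hash recorded in its lineage."""
    lineage = envelope["lineage"]
    steps = lineage["transformations"]
    expected = steps[-1]["result_sha256"] if steps else lineage["payload_sha256"]
    return _digest(envelope["payload"]) == expected


# Illustrative data only.
envelope = wrap_with_lineage({"invoice_id": "INV-1", "amount_cents": 1999}, "billing-api")
converted = apply_transformation(
    envelope, "convert cents to dollars",
    lambda p: {"invoice_id": p["invoice_id"], "amount": p["amount_cents"] / 100},
)
print(verify_before_posting(converted))
```

A payload altered outside `apply_transformation` no longer matches its recorded hash, so `verify_before_posting` returns `False` and the entry can be held for review instead of reaching the ledger.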

4. No Versioning of Financial Models or Assumptions

AI-driven forecasting and valuation tools are widely used in tech companies to support dynamic planning, pricing and investment decisions. These tools continuously learn, refine and adjust their outputs based on new data. While this feature can improve predictive accuracy over time, it often results in a lack of version control and can create an audit blind spot.

Without formal versioning of models and underlying assumptions, it’s nearly impossible for auditors to compare current and prior iterations to evaluate reasonableness or identify material changes.

For example, say a machine learning model used for fair value measurement automatically updates its weighting algorithms without logging the changes. This makes it tough for auditors to determine whether market conditions or model logic led to a shift in valuation, and it undermines confidence in reported results.

To address this vulnerability, implement model governance protocols that include systematic versioning, archiving of all prior models and a formal log of changes to key assumptions. This historical record demonstrates consistency and audit transparency.
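As a rough sketch of what systematic versioning might involve, the Python example below keeps an append-only history of a model's key assumptions and reports what changed between any two versions. The `ModelRegistry` class, the model name and the weights are hypothetical; a production setup would more likely rely on a dedicated model registry or a version control system.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelVersion:
    """An immutable snapshot of a model's key assumptions."""
    version: int
    assumptions: dict
    reason: str
    recorded_at: str


class ModelRegistry:
    """Append-only history of assumption sets for one model (illustrative)."""

    def __init__(self, model_id: str):
        self.model_id = model_id
        self._versions = []

    def register(self, assumptions: dict, reason: str) -> ModelVersion:
        """Archive a new version; earlier versions are never overwritten."""
        snapshot = ModelVersion(
            version=len(self._versions) + 1,
            assumptions=dict(assumptions),
            reason=reason,
            recorded_at=datetime.now(timezone.utc).isoformat(),
        )
        self._versions.append(snapshot)
        return snapshot

    def get(self, version: int) -> ModelVersion:
        return self._versions[version - 1]

    def diff(self, old: int, new: int) -> dict:
        """Map each changed assumption to its (old, new) values."""
        a, b = self.get(old).assumptions, self.get(new).assumptions
        return {
            key: (a.get(key), b.get(key))
            for key in sorted(set(a) | set(b))
            if a.get(key) != b.get(key)
        }


# Illustrative data only.
registry = ModelRegistry("hypothetical-fair-value-model")
registry.register(
    {"market_weight": 0.6, "income_weight": 0.4, "discount_rate": 0.11},
    reason="initial calibration",
)
registry.register(
    {"market_weight": 0.5, "income_weight": 0.5, "discount_rate": 0.11},
    reason="automated retraining on new quarter's data",
)
changes = registry.diff(1, 2)
print(changes)
```

With a record like this, a shift in valuation can be separated into the portion explained by logged changes in model logic and the portion attributable to market data.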

The Future of Audit Integrity in an AI-Driven Technology Company’s Finance Department

Artificial intelligence offers tremendous advantages to a finance team, but it doesn’t absolve CFOs of responsibility. It actually raises the bar.

As AI continues to influence how we generate, process and report financial data, auditors will expect three things: clear documentation of the source of AI-derived numbers; evidence that estimates are reasonable and repeatable; and the ability to trace every figure from its data source to the financial statements.

Most importantly, they will expect CFOs to explain the results, not just approve them.

To strengthen your audit readiness and establish a defensible AI governance framework, reach out to your Warren Averett advisor or ask a member of our team to reach out to you.